All News

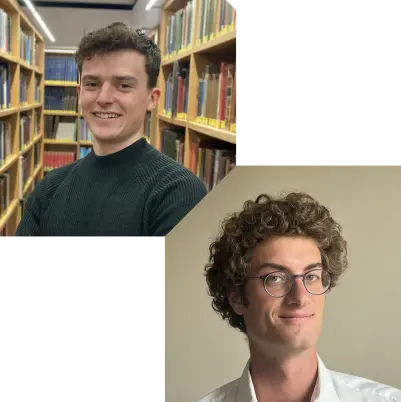

Benjamin and Ioannis recently started their PhD journey!

Benjamin decided to continue with us after a student project and a successful internship.

Ioannis has just arrived at ETH after completing his Master’s degree at the National Technical University of Athens.

We are thrilled they chose to stay with NSG for a few more years.

Welcome!

Hard to believe that it has been 10 years already! We celebrated the milestone during an action-packed afternoon and evening with almost all of our alumni, who now span three generations of PhD students! This, of course, called for a great group picture.

Check out my intro slides for the event:

Our group welcomes Ayush as a new team member. Ayush earned his PhD from NUS under the supervision of Ben Leong and now joins us as a post-doc, focusing on creative solutions to the problems caused by congestion control algorithm (CCA) heterogeneity on the Internet. Welcome!

We have plenty of interesting MSc-level projects offered in collaboration with NetFabric, our startup active in the field of network observability. Check them out here!

Continuing the tradition: here’s our 2024 group activity report.

2024 has been incredibly diverse and full of milestones: from teaching a two-week course at Ashesi University in Ghana to launching NetFabric, our first startup, which already employs seven amazing team members! Meanwhile, neither our research (we even won several awards) nor our teaching activities have taken a back seat.

Dive into the report

Our group welcomes Sushovan as a new team member. Sushovan earned his PhD from Rice University under the supervision of Eugene Ng and now joins us as a post-doc, focusing on energy-efficient optical network architectures. Welcome!

Our group welcomes Weiran as a new team member.

Before starting as a PhD student, Weiran earned his master’s degree in Cloud and Network Infrastructures from KTH and worked for some time at Ericsson as a software developer. He will be the first student to focus primarily on sustainable networking projects.

Welcome!

Jean and Pietro just started! Pietro did multiple projects with us in the past, and Jean just finished a successful summer internship with us. We are thrilled they chose to stay with NSG for a few more years.

Welcome!

I am thrilled to share that we’ve just co-founded NetFabric.ai, the first start-up to come out of our research group! NetFabric is a next-gen network observability platform based on the latest advances in generative AI and mathematical modeling. Our aim? To be the first platform that can provide real-time answers to any networking question. The core founding team comprises our own Tobias Bühler alongside members of the Secure, Reliable, and Intelligent Systems Lab, including Benjamin Bichsel and Prof. Martin Vechev.

You can expect more updates very soon, including about upcoming student projects in partnership with the startup.

Our paper on quantifying link sleeping in ISP networks won the Best Paper Award at HotCarbon ’24.

This is a very nice achievement for our nascent research in Sustainable Networking. Congratulations!

Edgar and Albert recently defended their doctoral theses. Congratulations! We will be sad to see them leave the group, but other people deserve to enjoy their company and skills too :-)

Our group welcomes Lukas and Valerio as new team members. Both are “home-grown” students who did multiple projects with us in the past, and we are thrilled they chose to stay with NSG for a few more years.

Welcome!

Our paper on routing attacks against cryptocurrency mining pools has been accepted at IEEE S&P 2024. This marks our return to S&P after a 7-year hiatus. Stay tuned for more updates as we gear up for San Francisco!

2023 was a relatively balanced year for us. Check out our activity report to get a glimpse at what we have been up to and what is in the pipeline in 2024.

Our group welcomes Laurin as a new team member. Before starting as a PhD student, Laurin completed his master’s in computer science at ETH, where he worked on serverless architectures and associative caches, among other topics. Welcome!

A couple of weeks ago I gave a talk at the Google Networking Summit on some possible applications of machine learning to networking problems. The talk looked in turn at: (i) what kind of models we should learn (hint: transformer-based models); (ii) how we can get our hands on network data to train these models (hint: leveraging big code!); and (iii) how much networking knowledge large language models have nowadays (hint: they’re pretty good, actually). You can find the slides here.

Our paper “QVISOR: Virtualizing Packet Scheduling Policies” has been accepted at ACM HotNets 2023! In this work, we ask ourselves: is it possible to simultaneously deploy multiple scheduling algorithms on existing commodity switches? Take a look at the pre-print to find out!

We finally have a new group website with a new design. There is now a full-text search for the publication list, which has grown so large that finding a specific publication had become difficult. And, of course, there is a dark mode!

Happy to report that our group will again be represented at SIGCOMM this year! Our paper on seamless network configurations, the first avoiding both permanent and transient violations, has just been accepted. As usual, stay tuned for the details! New York City, here we come :-)

Our group welcomes Theo as a new team member. Before starting as a PhD student, Theo did his master’s thesis on routing attacks in our group, which resulted in a publication at EuroS&P. Check it out!

2022 was one of our best years ever, on many counts. Check out our activity report to see what our group has been up to and what is in store for us in 2023.

Our paper “Reducing P4 Language’s Voluminosity using Higher-Level Constructs” has been accepted at EuroP4 2022! In this paper, we present O4, an extension of P4 that incorporates three higher-level constructs (arrays, loops, and factories) to reduce the voluminosity of P4 code.

Our paper entitled “Learning to Configure Computer Networks with Neural Algorithmic Reasoning” was accepted at NeurIPS 2022! In this paper, we explain how routing computations can be approximated using neural networks. Among other things, this allows us to efficiently “invert” these computations, which enables us to automatically synthesize configurations from their intended output. This synthesis problem is known to be hard: our recent ICNP 2022 paper shows that many instances of it are NP-hard/NP-complete. Having a way to approximate these computations allows us to “break” the inherent scalability barrier of solving these problems, at the price of accuracy. How to deal with this accuracy loss is among the many next questions we want to look at. Stay tuned!

Our group will have two papers at the upcoming ACM HotNets workshop! These two papers will mark our 10th and 11th HotNets papers since 2014.

Stay tuned to learn more about:

- How we plan to build the next generation of network traffic generators by leveraging millions of code repositories hosted on code-sharing platforms such as GitHub; and
- How we intend to build generalizable machine learning (ML) models for predicting network traffic dynamics using the Transformer architecture.

As usual, you’ll find the final version of the papers on our publications page in a couple of weeks.